AGI is here - Part 2

See the original article here. The argument was simple: measured against eight prominent definitions, AGI is already here. Which is precisely the problem - if everything qualifies in some way, the definitions aren't measuring anything, and the real question shifts from "have we arrived?" to "what are we actually talking about?"

The article drew more discussion than I expected - especially for a first blog post and first Hacker News submission. People evidently have strong opinions and no consensus.

Hacker News thread on the article

Most readers engaged in good faith and the discussion was genuinely interesting. A few themes came up repeatedly.

The definitions debate went nowhere

Many readers pushed back on specific definitions as weak or overdone - one comment called it "vapid blog posts having another thing to debate."

I agree - these definitions are vague and overused. The proof is in the discourse: more than 50 comments debated the definitions alone. The post was also flagged on Hacker News - initially as rage bait - then manually unflagged by an admin once it was clear the discussion was genuine. Very dramatic, to say the least.

What we have now deserves its own name

In the midst of all the debate, something interesting did emerge - we need new terminology.

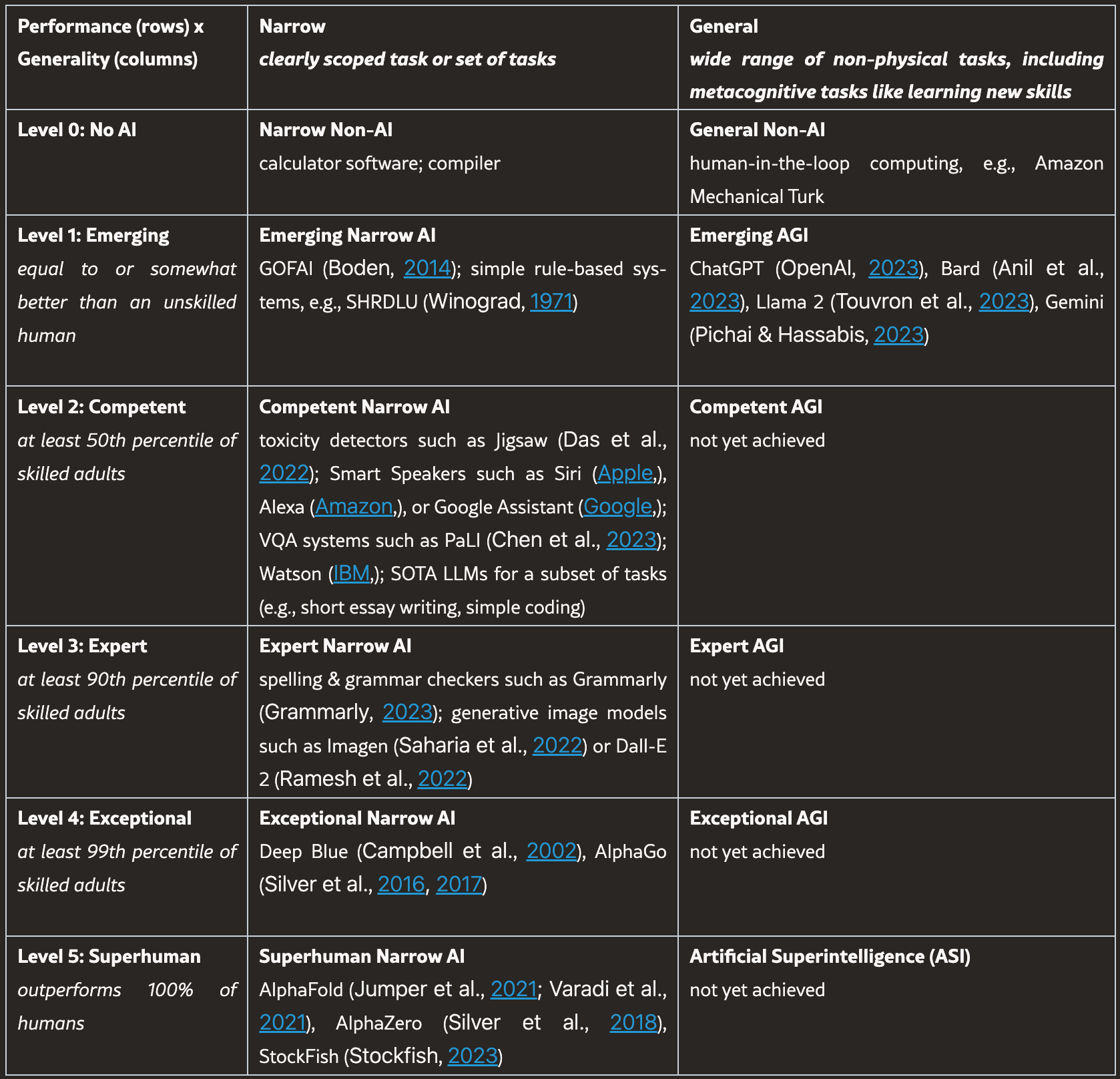

The Google DeepMind levels of AGI paper cited in the original blog is on the right track: replace the AGI binary with a levels framework so you can make graded, falsifiable claims about capability.

The problem is that the buckets are too coarse. GPT-2 and GPT-5 both fall into Emerging AGI - the lowest rung above "No AI" - which tells you nothing about the gap between them. That gap is the entire story: one model produces mildly plausible text; the other can be usefully composed with the scaffolding we build around it - the orchestration layer of tool calling, memory, planning, sub-agents, and so on.

What we have today is meaningfully different from what existed when the first GPT model launched, or even when GPT-3.5 Turbo was the frontier in 2023. A framework that can't distinguish between them hasn't solved the terminology problem - it has just renamed it. The lower end needs finer resolution, because that's where most of the meaningful progress of the last five years has actually happened.

Frontier models can draw logical relationships, give curated answers, and effectively use tools while staying coherent. Not perfect, but a genuine productivity multiplier compared to what came before.

I use AI daily to speed up my development work. It handles ambiguity reasonably well and produces coherent output most of the time. It also breaks: it can be confident while evidently wrong, it grows less coherent the longer a session runs, and sometimes it goes off the rails for no apparent reason. The difference is that, in its current form, these blips are outweighed by the general capability of the technology.

These capabilities aren't glamorous - reliable tool calling, consistent structured output, and coherence across a session - but they're what make a model composable with scaffolding: you can build something on top of it that works most of the time. We have jumped from no capability to moderately consistent, usable capability. None of this was possible with GPT-3.5 Turbo, and it deserves new terminology.
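To make "composable with scaffolding" concrete, here is a minimal sketch of one piece of an orchestration layer: a wrapper that asks a model for structured output, validates it, and retries on failure. Everything in it is an assumption for illustration - `model` is a hypothetical callable that takes a prompt and returns text, and `call_with_validation` and `make_scripted_model` are made-up names, not any vendor's API.

```python
import json
from typing import Callable


def call_with_validation(
    model: Callable[[str], str],
    prompt: str,
    required_keys: set,
    max_attempts: int = 3,
) -> dict:
    """Ask a (hypothetical) model for a JSON object; retry until it is usable."""
    last_error = "no attempts made"
    for _ in range(max_attempts):
        raw = model(prompt)
        try:
            parsed = json.loads(raw)
        except json.JSONDecodeError as exc:
            last_error = f"invalid JSON: {exc}"
            continue
        if not isinstance(parsed, dict):
            last_error = "reply was not a JSON object"
            continue
        missing = required_keys - set(parsed)
        if missing:
            last_error = f"missing keys: {sorted(missing)}"
            continue
        return parsed
    raise ValueError(f"model never produced usable output ({last_error})")


def make_scripted_model(replies):
    """Stand-in for a real model: returns canned replies in order."""
    remaining = iter(replies)

    def model(prompt: str) -> str:
        return next(remaining)

    return model
```

The scaffolding only pays off when the model gets it right most of the time: a wrapper like this can absorb the occasional malformed reply, but no retry budget rescues a model that rarely produces valid output.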

Where do we go from here?

The above is a real and useful threshold - but it is not the end of the road. There are things current models genuinely lack. Some are capability gaps - architectural limitations that better models or scaffolding might close. Others are a different kind of problem entirely - questions about what a system is, not just what it can do. To name a few:

Time

A model has no experience of duration - each session starts fresh, with no sense of how long ago anything happened. A context window is ordered text, not lived time. There's no impatience, no anticipation, no urgency. Timestamps can be fed in as data, but that's not the same as experiencing time or understanding the immovability of time, or the stakes it presents.

Intuition

A model operating on explicit rules and stated goals has no implicit feel for context that wasn't given to it.

A human wouldn't need every situation spelled out - they'd read the room. An AI scheduling assistant will book a 6am meeting the morning after a red-eye flight because the calendar shows it free - not because it's broken, but because nothing in its rules said not to.

You can add that rule. And the next one, and the one after that. But the space of implicit human context isn't a list you can finish - it's the accumulated weight of living inside a shared social and physical reality.

A human assistant doesn't need the rules enumerated because they already carry that context. The model doesn't, and no amount of prompt engineering closes an open-ended set. This may narrow as models scale - the same argument was made about chess and driving, and machines have since surpassed humans at chess and, in limited settings, arguably at driving. Whether implicit social context is the same kind of problem remains to be seen.
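The red-eye example can be sketched as a toy rule-based scheduler. This is an illustration under stated assumptions, not how any real assistant works: `Event`, `no_overlap`, `rest_after_red_eye`, and `can_book` are hypothetical names, and spotting a red-eye by its title is a deliberately crude stand-in.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    title: str
    start: datetime
    end: datetime


def no_overlap(start, end, calendar):
    """The only rule the assistant starts with: the slot must be free."""
    return all(end <= e.start or start >= e.end for e in calendar)


def rest_after_red_eye(start, end, calendar, rest=timedelta(hours=8)):
    """A rule added after the fact: no meetings within `rest` of a red-eye landing."""
    return all(
        not ("red-eye" in e.title.lower() and e.end <= start < e.end + rest)
        for e in calendar
    )


def can_book(start, end, calendar, rules):
    """A slot is bookable only if every explicit rule allows it."""
    return all(rule(start, end, calendar) for rule in rules)


flight = Event("Red-eye flight", datetime(2025, 3, 3, 23, 0), datetime(2025, 3, 4, 5, 0))
meeting_start = datetime(2025, 3, 4, 6, 0)
meeting_end = datetime(2025, 3, 4, 7, 0)

# The calendar shows 6am as free, so the first version books it.
booked_naively = can_book(meeting_start, meeting_end, [flight], [no_overlap])
# With the extra rule, the same slot is rejected.
booked_with_rule = can_book(
    meeting_start, meeting_end, [flight], [no_overlap, rest_after_red_eye]
)
```

Each new rule fixes exactly one case. The list of rules grows, but the space of situations it has to cover stays open-ended.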

Consciousness

The two gaps above are, at least in principle, closeable. This one is different - it's not a capability question but an ontological one. You can benchmark tool use and structured output, but you can't benchmark how a system internally "feels".

A model can produce the right answer, express the right emotion, and behave as if it has goals. But is there anything it's like to "be" that model?

We assume other people are conscious because they're like us. We don't have access to anyone else's inner experience - we infer it from similarity. A model that speaks fluently, reasons across domains, and expresses something that looks like uncertainty or curiosity is increasingly similar to us in its outputs. At what point would it be similar enough that we'd extend it the same assumption?

There's no clean answer - because the assumption was never principled to begin with. We extend consciousness to other humans by analogy, not by proof. Models just make it more visible.

AGI is the wrong term

The fact that AGI can mean anything from "fooled a human in a text box" to "achieved consciousness" tells you everything about why the term is broken. That range is not a spectrum; it's a category error.

Current frontier models with scaffolding are not the same thing as what came before. Future models may cross the thresholds above and then some.

Conversation and regulation depend on being able to say precisely what kind of system you're talking about. "AGI" doesn't do that. The field needs agreed gradations with enough resolution to distinguish what we had before, what we have now, and what may come next. Until we have them, every conversation about whether AI has "arrived" will keep talking past itself.